ChatGPT for Doctors: How to Use AI in Your Practice in 2026

A practical guide to using ChatGPT and purpose-built clinical AI tools in solo and group practices, with a clear breakdown of what each tool actually does.

Written by the Commure Scribe Team

Published: April 10, 2026

9 min read

What You Need to Know

- Physicians use ChatGPT and other AI tools to draft notes, write prior auth letters, create patient education materials, and cut admin time.

- General ChatGPT works for de-identified writing tasks but is not HIPAA-compliant out of the box and does not capture live visits. Purpose-built clinical AI tools fill those gaps.

- This guide covers what each tool does and where each fits.

Physicians across solo and group practices have been experimenting with ChatGPT since late 2022. The use cases were immediate and practical: prior auth letters drafted at midnight, patient-friendly discharge summaries, differential lists on tough cases.

The landscape has grown since then. General-purpose ChatGPT is one option. Clinical AI tools built specifically for medicine are another. Each solves a different problem. This guide covers how to use ChatGPT in practice, where it falls short, and what purpose-built tools handle that general AI cannot.

What Is ChatGPT, and How Are Doctors Using It?

ChatGPT is a large language model (LLM) built by OpenAI. It generates text from patterns learned in a large training corpus. Give it a prompt and it produces a response based on those patterns.

Doctors use it as a writing and reasoning assistant. It does not connect to your EHR, does not listen to visits, and does not have access to your patients' records. Its value is in generating well-structured text from inputs you provide.

"ChatGPT for doctors" has also become shorthand for a broader category of clinical AI. Three distinct tools now carry that label:

- General-purpose LLMs (ChatGPT, Claude, Gemini): writing tasks, summaries, admin work, de-identified drafting.

- Clinical decision support AI (OpenEvidence and similar): evidence lookups, differential support, guideline search. Trained on medical literature, not general web data.

- Ambient documentation AI (Commure Scribe and similar): real-time visit capture, structured note generation, EHR integration.

Each does a different job. Most of what physicians search for under "ChatGPT for doctors" falls into the first category, so much of this guide focuses there.

What Can Doctors Use ChatGPT For?

General-purpose LLMs handle tasks that are primarily linguistic. The physician does the clinical work. The AI handles the writing. In practice, those tasks include:

Note drafting. Paste a de-identified visit summary into ChatGPT and ask for a SOAP note. The output is often well-structured and cuts writing time. Review and edit before it enters the record. Hallucination rates make physician review essential.

Prior auth letters. AI handles the structure of a prior auth appeal well: clinical summary, medical necessity argument, supporting criteria. A 20-minute letter can be drafted in under 5 minutes.

Patient education materials. ChatGPT handles plain-language explanations well: new diagnoses, post-procedure care, drug side effects. Paste a clinical summary and ask for a patient-facing version at an 8th-grade reading level.

Differential brainstorming. Enter a clinical presentation and ask for a differential list. This surfaces diagnoses worth considering. It works as a checklist prompt, not a diagnostic engine.¹

CME prep and education. Residents and attendings use LLMs to generate practice questions, explain mechanisms, and summarize guidelines. Research in education contexts is generally positive.²

Admin writing. Referral letters, payer inquiry drafts, internal protocols, and staff memos all go faster with AI drafting. Clinical risk is low.

These tasks share one trait: none capture a live patient visit. The physician does the clinical work. The AI helps with the writing afterward.

What Are the Limitations of ChatGPT in Clinical Practice?

Hallucination. LLMs produce text that sounds right but is sometimes factually wrong. A 2023 study indexed in PMC found ChatGPT fabricated citations and misquoted drug dosages.³ It also makes confident errors in clinical reasoning tasks. Physician review is the safeguard against these errors. No current LLM has solved this problem.

Outdated knowledge. ChatGPT has a training cutoff. Guidelines change. Drug recalls happen. The model has no knowledge of evidence published after its training date. Verify outputs against live sources for current treatment standards.

No patient context. ChatGPT has no access to patient history, problem lists, or medications. It cannot pull from your EHR. Unless you paste that data into the prompt, the model works blind, and pasting identifiable data into a standard session raises the HIPAA issues covered below. This limits its value to text generation tasks.

Weak performance on complex cases. ChatGPT handles board-style questions well. Complex cases with conflicting findings or judgment under uncertainty expose its limits.⁴ Simple presentations get more reliable suggestions than complex ones. Decision support is most valuable exactly where performance is weakest.

No learning across sessions. ChatGPT does not reliably adapt to your corrections. Fix an incorrect note structure in one prompt, and it will not necessarily carry that fix into the next session unless you restate it.

Is ChatGPT HIPAA-Compliant for Clinical Use?

No, standard ChatGPT is not HIPAA-compliant for clinical use.

Standard ChatGPT does not include a Business Associate Agreement (BAA). HIPAA requires any vendor handling protected health information (PHI) for a covered entity to sign a BAA. If you enter patient identifiers, dates of service, or diagnoses into a standard ChatGPT session, you create HIPAA risk.

OpenAI does offer enterprise contracts with a BAA. ChatGPT for Healthcare is the enterprise version meant for organizations with large numbers of users. It also excludes conversations from model training.⁵ Pricing is not public; inquiries go through OpenAI's sales team.

Three categories of ChatGPT use by compliance risk:

- No PHI tasks: Admin writing, staff training materials, guideline summaries, patient education templates with no patient-specific data. No HIPAA exposure. ChatGPT works here without compliance concerns.

- De-identified inputs: Note structures drafted from a clinical scenario stripped of all 18 HIPAA identifiers. Compliant, but requires a consistent de-identification process and reduces utility because the AI lacks patient context.

- PHI tasks: Any task where patient-specific data enters the prompt. Requires a HIPAA-compliant platform with a signed BAA. Standard consumer ChatGPT is not that platform.
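The three tiers above reduce to two questions: does patient-specific data enter the prompt, and has it been fully de-identified? The minimal Python sketch below encodes that triage, assuming a practice wants to build it into an internal checklist tool. The names `Risk`, `classify_task`, and `platform_allowed` are hypothetical and illustrative only. The sketch mirrors the categories described here and does not itself check whether data is actually de-identified.

```python
from enum import Enum


class Risk(Enum):
    """The three compliance categories described above (hypothetical names)."""
    NO_PHI = "No PHI task: standard ChatGPT is usable"
    DE_IDENTIFIED = "De-identified input: usable only after all 18 identifiers are removed"
    PHI = "PHI task: requires a HIPAA-compliant platform with a signed BAA"


def classify_task(contains_patient_data: bool, fully_deidentified: bool) -> Risk:
    """Sort a proposed task into one of the three categories.

    The caller decides both flags; this function does not inspect any text.
    """
    if not contains_patient_data:
        return Risk.NO_PHI
    if fully_deidentified:
        return Risk.DE_IDENTIFIED
    return Risk.PHI


def platform_allowed(risk: Risk, has_signed_baa: bool) -> bool:
    """Only PHI tasks require a signed BAA; the other two categories do not."""
    return has_signed_baa or risk is not Risk.PHI


if __name__ == "__main__":
    risk = classify_task(contains_patient_data=True, fully_deidentified=False)
    print(risk.name, platform_allowed(risk, has_signed_baa=False))
```

For example, `classify_task(contains_patient_data=True, fully_deidentified=False)` returns `Risk.PHI`, and `platform_allowed(Risk.PHI, has_signed_baa=False)` returns `False`: that task belongs on a platform with a signed BAA, not in a standard ChatGPT session.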

Practices that want AI inside their clinical workflow with real patient context need a purpose-built tool.

How Does ChatGPT Compare to Clinical AI Tools Built for Doctors?

ChatGPT is a generalist tool. Purpose-built clinical AI tools are built around medical data, integrate with clinical workflows, and include compliance infrastructure by design. Three categories now compete for the "ChatGPT for doctors" label:

General-purpose LLMs (ChatGPT, Claude, Gemini). Best for de-identified writing tasks: note drafting from summaries, prior auth letters, patient education, admin writing. No HIPAA cover by default. No real-time visit capture. No EHR connection.

Clinical decision support AI (OpenEvidence and similar). OpenEvidence is the tool most media outlets call "ChatGPT for doctors." It is trained exclusively on peer-reviewed journals and guidelines, cites every claim, and withholds answers when uncertain. It has a large and growing base of verified physician users.⁶ It handles evidence lookups, differential support, and guideline search.

Ambient documentation AI (Commure Scribe and similar). These tools listen to a live physician-patient conversation and produce a structured clinical note from the audio. The clinician does not type, paste, or prompt. HIPAA-compliant by design. EHR integration built in. These tools target the documentation burden clinicians face today.

The practical split: use general ChatGPT for de-identified writing tasks. Use OpenEvidence for clinical questions. Use an ambient documentation tool for notes.

What Is Ambient Documentation AI, and How Does It Differ from ChatGPT?

Ambient tools listen to a physician-patient conversation and produce a clinical note from the audio. The clinician does not type, paste, or prompt. The tool captures the visit as it happens.

Ambient AI scribes use LLMs as a core component, but the system built around the model differs from ChatGPT in four ways:

- Real-time capture. The tool listens during the visit and processes audio through medical language models. The note comes from the conversation, not from a post-visit summary.

- EHR connection. Clinical-grade tools connect directly to EHR systems, so notes can land in the record without copy-paste. Enterprise tiers typically use bidirectional API sync; lower tiers may rely on copy-paste.

- HIPAA by design. Ambient scribe platforms include BAAs, compliant data storage, and documented audio retention policies. Audio handling varies by vendor. Confirm the policy before you sign.

- Structured output. Notes come out as SOAP, DAP, or specialty templates. Many tools also suggest ICD-10 and CPT codes. The clinician reviews and approves. Review time per note is brief.

The workflow difference:

General ChatGPT: see patient, write notes or dictate, paste de-identified summary, prompt LLM, paste output into EHR, edit.

Ambient AI scribe: see patient (tool runs in background), click End Recording, review and approve the note in the EHR.

The clinician stays present in the room. Note-taking runs in the background.

What Does Research Say About AI Documentation Tools in Practice?

The evidence base has grown since 2023, but it is not uniform.

A 63-week study from the Permanente Medical Group covered 7,260 physicians and 2.5 million encounters. It found charting time savings of 15,791 hours across the cohort. High users saved 2.5 times more time per note than low users. Physician age showed no link to adoption rates.⁸

A 2025 UCLA study found charting time as a share of total visit time fell by roughly half in the AI group. A separate AMA analysis found primary care physicians spend an average of 36.2 minutes on EHR tasks per 30-minute visit.⁹ After-hours EHR time has gotten worse year over year. Headline burnout rates have slightly improved in that period, but the documentation burden has not.

Not all studies are positive. A Cleveland Clinic study of high-burden primary care physicians found one significant change from AI scribe use: fewer characters typed. Same-day chart closure fell 3%. After-hours work increased, though not significantly. Mean likelihood to recommend scored 6.5 out of 10.¹⁰

Note bloat is also documented. Some physicians produce longer notes with AI. The tool captures detail that manual notes skip. Longer notes are not better notes. Payers have flagged upcoding concerns in some cases.

The overall direction of the evidence is positive, but most of it comes from large health systems. Those systems have IT support most practices do not. Run your own trial on your own visit volume. Do not project system-level numbers onto your practice.

What Should Doctors Look for When Evaluating Clinical AI?

Practices evaluating tools hit the same decision points. Work through these first:

- BAA availability. Confirm a BAA is included at the tier you are buying.

- Audio handling. Audio from a patient visit is PHI. Ask how the tool stores, encrypts, and retains recordings. Policies vary significantly across vendors. Get the specifics in writing.

- EHR connection. Copy-paste works at all practice sizes. Most vendors offer API sync at enterprise tiers. If you use athenahealth, eClinicalWorks, or DrChrono, confirm the vendor names your EHR specifically. Do not rely on "works with most EHRs" language.

- Specialty support. Note templates vary by specialty. Confirm the tool has templates for your visit type or offers customization options.

- Review step. Confirm there is an approval step before the note is finalized. No tool should push notes to the chart without clinician review.

- Evaluation period. Some tools offer an ongoing free tier with volume limits. Others offer a 7-day trial that expires. A 7-day trial can give you enough visits for a first read on note quality in your specialty.

Commure Scribe: How It Works for Solo and Group Practices

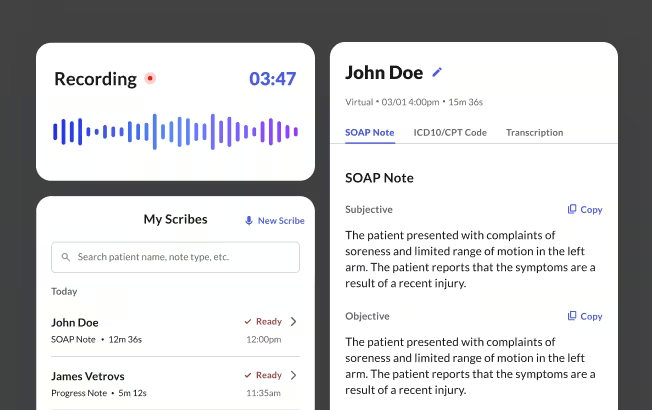

Commure Scribe is an ambient documentation tool for solo clinicians and group practices. It captures the physician-patient conversation and produces a structured SOAP note in seconds. The clinician reviews and approves before the note enters the record.

Workflow. Open the app on a phone, tablet, or desktop. Click Start Recording at the top of the visit. At the end, click End Recording. A structured SOAP note appears within seconds, along with suggested ICD-10 and CPT codes. Review, edit if needed, and finalize. Copy-paste and one-click EHR sync are available.

Accuracy and languages. Commure Scribe supports 90+ languages with automatic detection. Notes come out in English. The tool handles background noise, overlapping speech, and accented speakers.

EHR compatibility. Commure Scribe can integrate with 60+ EHRs, including AdvancedMD, Elation Health, WebPT, eClinicalWorks, athenahealth, Practice Fusion, Cerbo, SimplePractice, Tebra, and Kipu Health.

Specialties. Commure Scribe works across a wide range of specialties, including primary care, internal medicine, family medicine, behavioral health, psychiatry, and pediatrics, among others. Templates are customizable across specialty visit types as well.

Data practices. Audio is stored, encrypted, and retained per HIPAA requirements. Notes are stored securely onshore on HIPAA-compliant infrastructure. A BAA is included at all tiers.

Frequently Asked Questions

Can doctors use ChatGPT to write clinical notes?

Yes, with caveats. ChatGPT can draft notes from de-identified summaries. It does not capture live visits and does not connect to your EHR. Keep patient-specific data out of a standard ChatGPT session: without a BAA, there is no compliance cover. Ambient tools handle this by design.

What are the main risks of using ChatGPT in clinical practice?

The main risks are hallucination, outdated knowledge, no patient context, and HIPAA compliance gaps. Physician review before content enters the record is the primary safeguard. Purpose-built clinical tools address specific risks: OpenEvidence is designed to reduce hallucination for clinical questions, and ambient scribes handle HIPAA-compliant documentation.

What AI tools are available for doctors?

Three categories cover the landscape. Clinical decision support: OpenEvidence (free for individual clinicians). Ambient documentation: Commure Scribe, Freed AI, Nabla, Heidi Health, Suki, DAX Copilot, Abridge, DeepScribe. General-purpose LLMs: ChatGPT, Claude, Gemini for de-identified writing tasks. Each category solves a different problem.

What is OpenEvidence?

OpenEvidence is a clinical decision support tool trained on peer-reviewed journals and guidelines. Major media outlets use "ChatGPT for doctors" to describe it. It handles evidence lookups, differential support, and guideline search.

Does ChatGPT include a Business Associate Agreement?

Standard consumer ChatGPT, including Plus, does not include a BAA. ChatGPT Enterprise offers a BAA for qualifying contracts. Without a BAA, entering PHI creates HIPAA exposure. Use a tool with a BAA built in for any PHI workflow.

How should a practice roll out an AI scribe?

Set the review requirement first: the clinician always reviews the note before it goes to the chart. Run a trial for at least two to three weeks across multiple visit types. Pick one workflow owner to set up templates and answer questions from other providers. Use the onboarding support most vendors include. Mid-sized and larger practices should adopt a brief written policy on AI use in charting before rollout.

Sources

- [1] JMIR Medical Education. (2023, December 21). Using ChatGPT for Clinical Practice and Medical Education: Cross-Sectional Study. https://mededu.jmir.org/2023/1/e50658/

- [2] ScienceDirect. Using ChatGPT for Clinical Practice and Medical Education. https://www.sciencedirect.com/org/science/article/pii/S2369376223000880

- [3] National Center for Biotechnology Information. (2023, June 27). Utility of ChatGPT in Clinical Practice. PMC. https://pmc.ncbi.nlm.nih.gov/articles/PMC10365580/

- [4] Topaz, M., Peltonen, L. M., & Zhang, Z. (2025). Beyond human ears: Navigating the uncharted risks of AI scribes in clinical practice. npj Digital Medicine, 8, 569. https://doi.org/10.1038/s41746-025-01895-6

- [5] OpenAI. ChatGPT Enterprise. https://openai.com/enterprise

- [6] HLTH. (2026, January 21). OpenEvidence Doubles Valuation to $12bn as Physician Adoption Accelerates. https://hlth.com/insights/news/openevidence-doubles-valuation-to-12bn-as-physician-adoption-accelerates-2026-01-22

- [7] Reddit r/PMHNP. (2026, February 5). AI in Private Practice. https://www.reddit.com/r/PMHNP/comments/1qwvhor/ai_in_private_practice/

- [8] The Permanente Medical Group / NEJM Catalyst. (2025). AI Scribes Save Physicians Time, Improve Patient Interactions and Work Satisfaction. https://permanente.org/analysis-ai-scribes-save-physicians-time-improve-patient-interactions-and-work-satisfaction/

- [9] American Medical Association. (2024). Doctors Work Fewer Hours, But the EHR Still Follows Them Home. https://www.ama-assn.org/practice-management/physician-health/doctors-work-fewer-hours-ehr-still-follows-them-home

- [10] Alpert, J. M., Saper, R., Boose, E., Ruff, J., Hopkins, K., Gaskins, D., Guo, N., Schneider, J. P., Giffi Scibona, A., Gutierrez, J., & Rothberg, M. B. (2025, November 20). Evaluating an artificial intelligence scribe for clinical documentation. Digital Health, 11, 20552076251395588. https://pmc.ncbi.nlm.nih.gov/articles/PMC12638734/
